What is this? This newsletter aims to track information disorder largely from an Indian perspective. It will also look at some global campaigns and research.

What this is not? A fact-check newsletter. There are organisations like Altnews, Boomlive, etc. who already do some great work. It may feature some of their fact-checks periodically.

Welcome to Edition 33 of MisDisMal-Information

Regulating the ‘Internet’ This Week

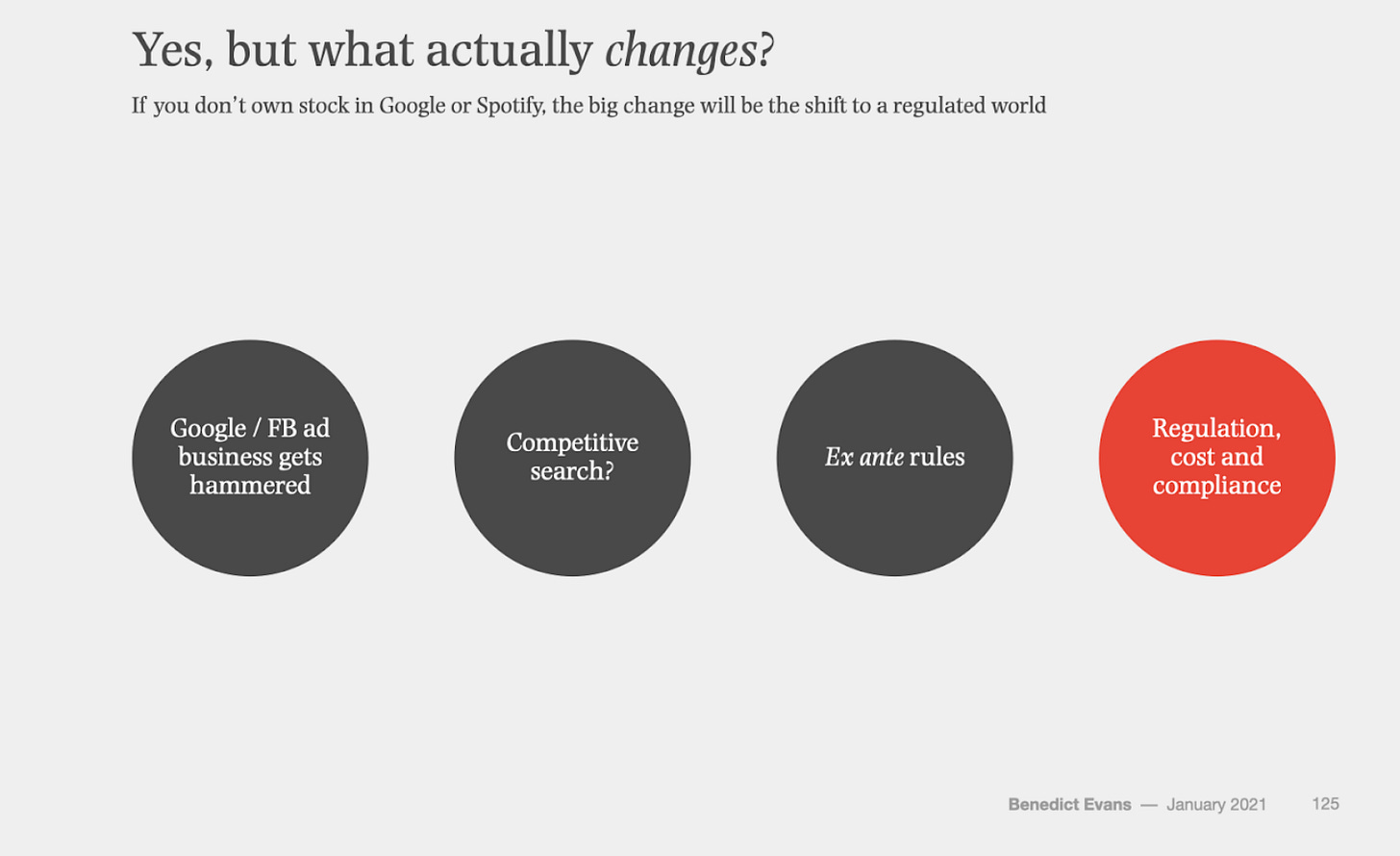

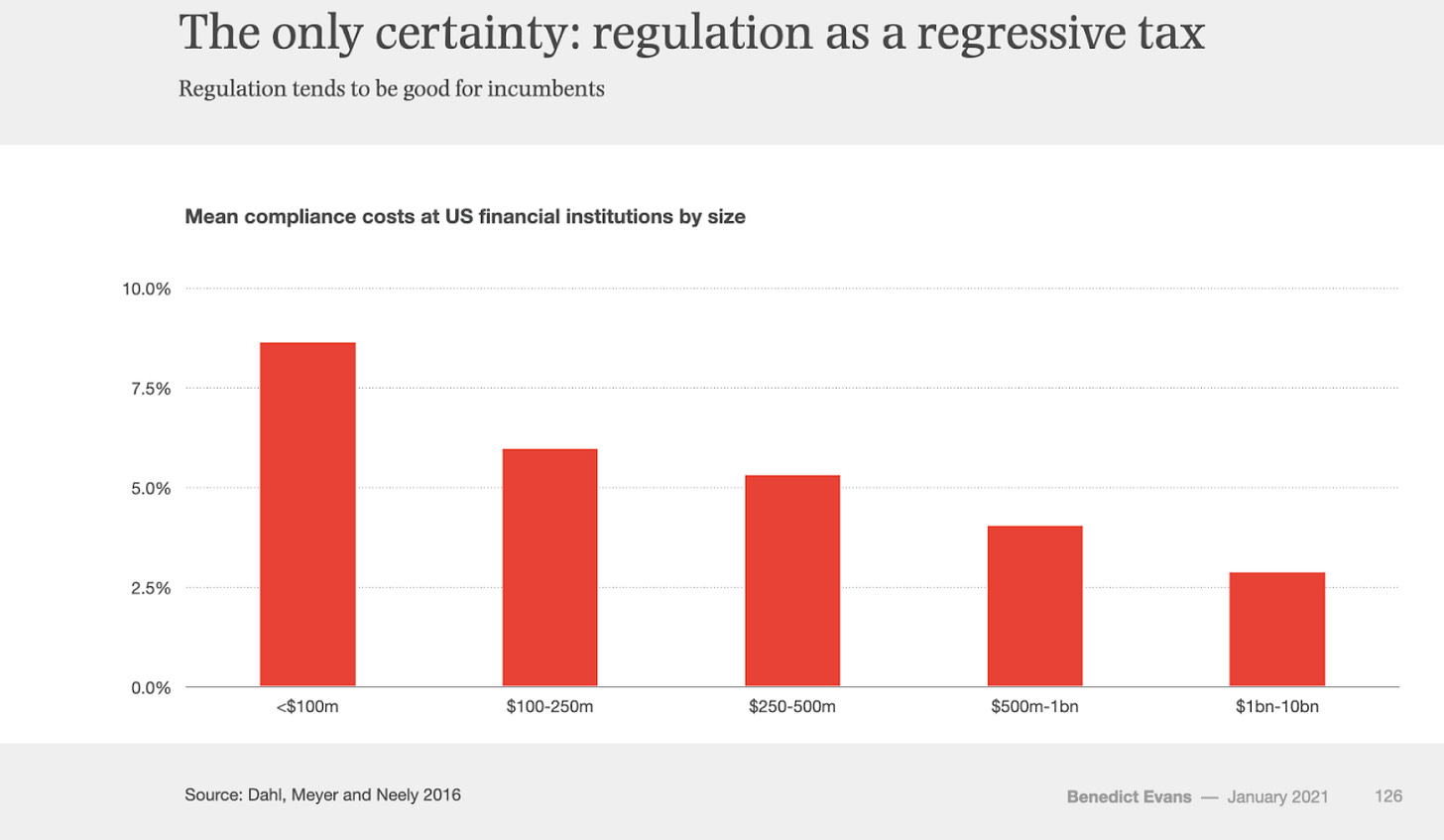

In the latest edition of his yearly presentation on tech industry trends, Benedict Evans touched upon the topic of regulating the internet. The premise appeared to be (in the absence of a talk-track) that a wave of tech which makes a significant impact gets regulated. He goes on to state that regulation acts as a ‘regressive tax’ and tends to favour incumbents.

And in an episode of the Tech Dirt podcast aptly titled ‘Regulating The Internet Won’t Fix a Broken Government’, Heather Burns (from the Open Rights Group) makes a similar point while explaining the UK’s Online Harms framework and how it seeks to impose a ‘duty of care’ on an expansive set of use-cases on the internet [at about 28 minutes in, she says that the framework implies that ‘there is no such thing as society, there is only content moderation’]. Another point that she makes is that when proposals seek to regulate the ‘internet’, they are typically aimed at a handful of platforms (though I think that applies to a lot of industries that are ultimately regulated).

Francis Fukuyama has proposed a middleware solution to the problem of a handful of platforms and their impact on political discourse. From a December episode of the Capitalisn’t podcast:

Middleware is software that sits on top of the existing platforms and basically serves as an interface between the user and a platform.

..

The heavy version would have the middleware actually be the gateway to the platform’s content. So, the platform essentially becomes a dumb pipe that simply provides information,

…

The light version is where the platforms continue to do what they do. They serve up the content they want to serve up. But the middleware behaves like Twitter is behaving now. They tag things. They say, “This is controversial,” or, “This does not check out,” or, “Please go to this website for further information about this point.”

While Jack Dorsey has spoken of a marketplace for algorithms [The Verge], companies are pretty secretive about how algorithmic curation works [Kaveh Wadell - Consumer Reports].

And in a piece arguing that disinformation is threatening evidence-based policymaking, Gill Savage notes:

However, action by governments isn’t always needed, particularly if traditional news media are able to challenge disinformation.

AllTechisHuman published a report titled ‘Improving Social Media’, which highlights the overlapping challenges:

There is no perfect solution: a movement toward greater content moderation also brings forward accusations of overreach, lack of transparency, and uncomfortable questions of concentrated power in unelected officials. To lessen this moderation, however, may increase a polluted information ecosystem that strains civil discourse and democracy. A push toward more private communication is desirable for privacy advocates and political dissidents—but also radical movements and the sharing of abusive content that thrives in secrecy. Moving toward a blockchain future may offer greater individual ownership of data, but its lack of centralization may also weaken the ability to maintain a vibrant and safe environment.

The report contains interviews with ~40 people, who were asked a range of questions, including what they felt the costs of doing nothing were. In most of the cases where this question was actually published, interviewees suggested that doing nothing was not an option [note: ‘doing nothing’ here is not a reference to regulation, but to taking steps to address the shortcomings of social media].

So let’s take a quick tour to see what was being suggested this week.

A live list of the ‘most popular misinformation content’, with a citizen-run jury providing oversight. [Tauel Harper - The Conversation]

A national register of misinformation sources and content (from the same piece as the live list above).

A suggestion similar to the live list was also made to a House of Lords Committee [ComputerWeekly]:

“We need real-time information on suspected misinformation from the internet companies, not as the government is [currently] proposing in the Online Safety Bill,” said Moy, adding that Ofcom should be granted similar powers to the Financial Conduct Authority to demand information from businesses that fall under its remit.

Sun-ha Hong makes the case for why transparency isn’t the silver bullet it can often be made out to be - especially in a polluted information ecosystem [CIGI].

Too often, transparency ends up a form of free labour, where we are burdened with dis- or misinformation but deprived of the capacity for meaningful corrective action. What results is a form of neo-liberal “responsibilization,” in which the public becomes burdened with duties it cannot possibly fulfill: to read every terms of service, understand every complex case of algorithmic harm, fact-check every piece of news.

In an interview with TOI, Sanjay Hegde suggested an independent regulator for content takedown on social media.

And Jillian York argues that content moderation by itself won’t stop the spread of disinformation [The Conversationlist].

the ways in which certain types of disinformation are prioritized for debunking, while others are allowed (often for nationalistic or propagandistic reasons) to flourish should serve to illustrate why our current dialogue around tackling mis- and disinformation—and particularly its emphasis on combating these ills with technology and censorship—is set to fail. As a society, we must become more comfortable with admitting that we don’t always have the answer;

She concludes:

If lawmakers are serious about combating disinformation, then they should start looking inside classrooms and churches. They should follow the money trail and look a bit harder at why our democratic systems are failing. And most importantly, they should step away from technosolutionism and stop viewing it as anything but what it is: A stopgap measure.

None of this is to say that we shouldn’t be working to address the problems that the internet has created. The point is that fixes for the underlying problems that have been exposed and exacerbated will not follow automatically - we are fooling ourselves if we think those will go away with a snap and click.

The worrying themes of climate memes

Cameron Oglesby writes about politically charged memes, especially around climate change [Grist]:

Picture this: You’re feeling Zoom-fatigued after a long, stressful day of remote work. Looking for something funny to offset a difficult day, you pick up your phone and open up the meme-sharing app iFunny. But instead of being met with a playful pet video or a witty ATLA reference, you’re bombarded with post after post bashing Joe Biden, Greta Thunberg, and the Green New Deal.

Those politically-charged iFunny memes aren’t just a blip. The Russian-owned meme-sharing site, which has an estimated 10 million monthly active users, has received criticism in the past for its heavily conservative, at times racist, and occasionally pro-violence posts. But especially since Joe Biden was elected, there looks to have been a surge in user-generated content taking aim at left-leaning climate policy.

I had touched on the weaponisation of memes in Edition 2 (What me-me worry) and the risks of ‘just a joke’ content in Edition 21.

During a recent interview on CBSN, Sara Fischer from Axios highlighted some of the challenges:

So let me give you a good example. If you were to post the word poison on your Facebook, a machine might not necessarily say that's a bad thing. If you were to post a picture of a vaccine, you know, like a needle, I don't know that a computer would necessarily say that's a bad thing either.

But if you put the word poison on top of a picture of a needle, obviously, you and I know that changes the meaning. However, computers can't pick up on that context the same way that a five-year-old or any other person could.

While multi-modal detection is getting better, it is still far from being able to detect this kind of content, which is easily tweaked and shared again.

But, wait, we were talking about the climate weren’t we?

A little over a week ago, Facebook expanded its program to counter climate mis/disinformation [Ben Geman - Axios]

Facebook Thursday morning unveiled several changes to the Climate Science Information Center it first launched in September. The platform steers users to the site when they search for climate-related terms. Changes and additions include...

Making it available to Facebook users in Belgium, Brazil, Canada, India, Indonesia, Ireland, Mexico, the Netherlands, Nigeria, Spain, South Africa, and Taiwan. It initially launched in the U.S., U.K., Germany and France.

Where it's not available, Facebook is directing users to the UN Environment Programme.

I hate to be cynical, but I can already see the reporting cycle around this. Let me reuse Jane Lytvynenko’s tweet from an earlier edition, in the context of vaccine mis/disinformation.

Of course, I should add there will also be instances of this policy affecting climate advocacy groups. Twitter seems to be ahead on that front based on its no political ads policy. Emily Atkin writes on MSNBC:

Twitter banned all political ads in 2019, in part as a response to the Trump campaign's misinformation ahead of the presidential election. The effect, however, was that everyone was banned from promoting tweets about political issues — even climate change. And now, as recently as Tuesday, environmental groups have publicly affirmed that they can't pay to spread tweets fact-checking oil companies. Their tweets would be considered prohibited "political content."

But she points out that other interest groups were able to run climate-themed ads [Heated]:

At least two ads from the American Petroleum Institute (API), the oil industry’s largest trade group, opposed “restricting development” on “federal lands and waters.” The ads ran on January 26, the day before Biden released a slew of executive actions to address the climate crisis—including one to restrict new fossil fuel development on federal lands and waters. (The administration called that day “Climate Day.”)

So, while Facebook looking to act against climate mis/disinformation is a good thing, I want to go back to the point I made about structural issues earlier. Benjamin Franta published an academic article on ‘oil industry disinformation on global warming’. [tandfonline, Jan 2021]

A newly discovered archival document shows the American Petroleum Institute was promulgating false and misleading information about climate change in 1980, nearly a decade earlier than previously known, in order to promote public policies favorable to the fossil fuel industry. This finding demonstrates early use of public-facing disinformation about global warming by the petroleum industry and suggests commercial fossil fuel interests played a more obstructive role in climate change discourse and policy throughout the 1980s than previously understood.

During the recent power blackouts in Texas, there were attempts to link them to renewable sources of energy [NYTimes]. Ketan Joshi appears to have traced the narrative surrounding a meme about Germany to SkyNews Australia, and then posits that Google itself could become a source of climate mis/disinformation as a result of the deal with NewsCorp. A story in FT (paywall) 2 weeks ago also points to the role of Rupert Murdoch and even quotes 2 former Australian Prime Ministers:

Murdoch probably does shape rightwing views on climate. In an Ipsos Mori survey of 20 countries in 2014, the three countries with least belief in man-made climate change were his main markets of the US, Britain and Australia. British attitudes have since improved, but the US and Australia retain large fringes of climate deniers, reports YouGov.

Now, I must admit I haven’t spent years going down this particular rabbit-hole. What it does indicate is an information delivery system that isn’t working. Facebook taking down some extra posts (wait till they throw a number in millions at us) is not going to change the underlying machinations, whatever they might be.

Meanwhile in India

You’ll notice that I haven’t written extensively about the Intermediary Guidelines and Code of Ethics for Digital Media. This is because I haven’t had the opportunity to fully process it.

I did watch the press conference though, and tried to read in between the lines. 2 notable things:

At 23:03, internet shutdowns are justified on the basis that exercising the Fundamental Right of Speech and Expression can involve using the internet, and therefore it is subject to ‘reasonable restrictions’.

Open-ended references to Section 69A of the IT Act, referred to as ‘blocking rules’, were made a number of times.

Unsurprisingly, some Indian news publishers are hoping for regulation similar to Australia’s Media Bargaining Code.

Aside 1: Listen to this Lawfare podcast episode with Rasmus Kleis Nielsen, where he argues that if the aim of the code was just to extract money then, arguably, it has succeeded on that front. On the question of benefitting journalism, well, we don’t know.

Aside 2: I should add that it isn’t just Indian publishers doing this of course. Here is Time saying that ‘Australia may have just saved journalism from big tech’.

The Indian Newspaper Society has written to Google India asking for a share of advertising revenue [FreePressJournal].

A TOI editorial asked for a ‘Fair share’. It also ran Microsoft’s statement supporting the code verbatim.

ThePrint also published an opinion piece along similar lines.

An Exchange4Media article quoted people from Mathrubhumi and Eenadu with similar asks.

TheHindu took a softer line drawing attention to the ‘intent’ and saying that other countries could follow.

As far as I can tell, TheKen (in an edition of their newsletter Beyond The First Order) is the only one that has come out and said that such regulation would not be beneficial. Do you know any others? Let me know!

4 people were arrested for ‘allegedly duping over 4,000 people of approximately ₹ 1.20 crore by creating fake websites for registration on Government e-Marketplace’ [NDTV]

Police said the accused paid huge sums to Google Ads to have their websites listed at the top of Google Search results.

..

It was also found that the sites were registered and hosted on foreign servers to evade detection

Host in India impending?

Cyberabad police arrested a man ‘for spreading fake news on social media that the Balanagar-Jeedimetla flyover in Hyderabad crashed, killing several people’ [TheNewsMinute]

Balanagar Inspector speaking to TNM said, "We have arrested him for criminal intimidation and spreading false news, we have identified 17 more persons who circulated the video in the same manner."

The police said that they will book cases against people who spread such misinformation which creates panic among the people. So more arrests to follow?

Newslaundry reports ‘News site falsely accuses Kashmiri journalist of ‘working for ISI’. Zee News, IANS amplify smear’. From Newslaundry’s story:

Greek City Times based its piece on a report by Disinfo Lab, which claims to unveil "fake news and propaganda that intend to create turmoil among people". Disinfo Lab said the toolkit posted by Thunberg cited a “foreign expert” as a resource person: Pieter Friedrich. The report contained the same scare phrases – “damaging Indian interests”, “Pakistani interests”, “collusion with ISI”. It described Friedrich as an “anti-Gandhi crusader”.

I think a quick call-out is in order. I do want to point out that Newslaundry did run a story based on a report from the same group back in January [Pakistan’s ISPR made up a story of Khalistani hand in farmer protests. Indian media lapped it up]. A completely anonymous/unknown group did seem like a cause for concern even back then. The report took a lot of leaps of faith which were uncritically reported. I was wary then… I am wary now.

I’m all for OSINT research and totally get the need for researchers to protect their identities. But I would be very, very, very cautious when using OSINT research by a completely anonymous entity. High potential for abuse.

Khalistan theory against protesting farmers was planted by Pakistan & boosted by PR wing of Pakistan’s armed forces. Once it took hold in India, RW "useful idiots" fell for it and gave it life. TV News piled on. @NidhiSuresh_ 🔥 #IndiaAgainstPropaganda https://t.co/7RTF3bipVf 🔗 @Memeghnad

Varun Gandhi has reportedly served ‘a legal notice to a YouTube channel for spreading ‘fake news’ about him.’ [Financial Express]

He [Varun Gandhi] shared four different screen grabs of videos on YouTube which suggested that Gandhi may join the Congress and destroy BJP’s politics in Uttar Pradesh. All four different videos carried the same message that he was ready to jump the ship and that the BJP was in trouble.

And the trend of content moderation through courts continues.

PTI reports on the Unnao case and police action on Twitter handles [CNBC TV18]:

An FIR has been lodged against eight Twitter handles for allegedly propagating fake news in connection with the death of two girls here last week, police said on Monday. The Twitter handles include news portal ‘Mojo Story’, led by senior journalist Barkha Dutt who termed the FIR a case of brazen “harassment and bullying”.

A former Congress MP was booked as well [TOI]. DigiPub spoke out against the FIR [TheNewsMinute]

An investigation by DFRLab highlighted that hashtags calling for violence were amplified by Pro-govt actors. Edition 29 touched upon this too. [Ayushman Kaul - DFR Lab]

Pranav Dixit reports that Koo is filled with hate. But it’s all Kool, they are apolitical after all. [Buzzfeed News]

And while I am not a fan of drawing parallels between Koo and Parler - there is at least one (more?) thing in common. Early adopters appear to have increased activity levels… on Twitter. [Arshia Arya, Dibyendu Mishra, Joyojeet Pal]. The corresponding story for Parler wasn’t as data-driven though [Washington Post]