What is this? MisDisMal-Information (Misinformation, Disinformation and Malinformation) aims to track information disorder and the information ecosystem largely from an Indian perspective. It will also look at some global campaigns and research.

What this is not? A fact-check newsletter. There are organisations like Altnews, Boomlive, etc., who already do some great work. It may feature some of their fact-checks periodically.

Welcome to Edition 50 of MisDisMal-Information

In this edition:

Why we should have seen more context about the study which claimed India was the biggest source of Covid-related misinformation.

The role of religious and partisan identity in correcting belief in falsehoods.

Oh, look at that, a mini-milestone for MisDisMal-Information at 50 🍾 !

India, the biggest source of COVID-related misinformation?

On 15th September, TOI published a story titled “India world’s top source of misinfo on Covid-19: Study”. PTI’s similarly headlined wire story was picked up by multiple publications, including Business Standard, Livemint, The Week, MoneyControl, etc.

At the time, I incorrectly wrote that the study only tagged India as ‘most affected’ instead of calling it the largest source. But a more thorough reading later made it clear that the study did actually say that.

Wait, are you contesting that India could indeed be the largest source of misinformation?

Good question. It may or may not be. I have a few issues with the study, which I feel should have been addressed in its coverage rather than uncritically amplifying its assertions. I will adapt what I wrote on SochMuch 💭 (yes, that’s a thing 😂).

Here is a link to the study, whose abstract states:

This study analyzed 9,657 pieces of misinformation that originated in 138 countries and fact-checked by 94 organizations. Collected from Poynter Institute’s official website and following a quantitative content analysis method along with descriptive statistical analysis, this research produces some novel insights regarding COVID-19 misinformation. The findings show that India (15.94%), the US (9.74%), Brazil (8.57%), and Spain (8.03%) are the four most misinformation-affected countries.

The database that the abstract refers to tags a country to a fact-checked item if it is referenced, not only if it is a 'source'. As far as I can tell, it doesn't even attribute a country as a source. And since the study relied on scraping the database, we can assume the author(s) did not have any additional information that led to this kind of attribution:

We collected these data using Web Scraper, an automated scraping extension for web browsers (see more http://webscraper.io). In this automated web scraping, we extracted the claims of misinformation, fact-checkers, sources, dates, countries, types of claims, and explanations of misinformation.

In this example - both Brazil and India are referenced. However, the misleading information did not appear to originate in India and only referenced it. In my time tracking occurrences of vaccine-hesitancy-related keywords along with mentions of India, I’ve observed that India is frequently referenced in anti-vax narratives.

But there's more to consider about the database itself -

The database includes instances that are already "fact-checked".

The IFCN code has 92 active signatories, of which 15 are from India. And while they don't restrict themselves to addressing misleading information from India only, that likely forms a significant portion of their output. AFAIK, no other country has as many.

The 'CoronaVirusFacts' Alliance has 99 participants, and I counted 9 or 10 from India.

Outside the top 4, no other country in the top 10 has more than two fact-checking organisations that are signatories.

This means there is a possibility that the countries which were the "most-affected" also happened to be ones that were the most represented in the database because:

They were referenced more frequently than others.

They have more organisations dedicated to debunking misleading information.
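To make the first point concrete, here is a minimal sketch in Python using made-up records. None of this is from the actual database, and the `origin` field is a hypothetical one added purely for illustration (as noted above, the real database does not appear to record a source country). It only shows how counting every tagged country can produce a very different ranking from counting where a claim started.

```python
from collections import Counter

# Hypothetical toy records, NOT taken from the Poynter database.
# "tagged" lists every country an item references; "origin" is an assumed
# field for where the claim started (the real database does not seem to have it).
items = [
    {"tagged": ["India"], "origin": "India"},
    {"tagged": ["India", "Brazil"], "origin": "Brazil"},
    {"tagged": ["India", "US"], "origin": "US"},
    {"tagged": ["US"], "origin": "US"},
    {"tagged": ["Spain", "India"], "origin": "Spain"},
]


def shares(counter):
    """Convert raw counts into percentage shares, rounded to one decimal."""
    total = sum(counter.values())
    return {country: round(100 * n / total, 1) for country, n in counter.items()}


# Count a country once for every item that merely references it.
by_tag = Counter(country for item in items for country in item["tagged"])
# Count a country only when the claim started there.
by_origin = Counter(item["origin"] for item in items)

tag_shares = shares(by_tag)
origin_shares = shares(by_origin)

print("share by tag:   ", tag_shares)
print("share by origin:", origin_shares)
```

In this toy data, India tops the list when counting tags but not when counting origins. The second point (more fact-checking organisations producing more entries) would skew the counts in a similar direction, though it is not modelled here.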

None of this is to downplay the prevalence of misleading information in India's information ecosystem. In fact, it is to do with the role of a very significant actor in it - news media. This is important context that, I think, should have been included in any reporting on the topic. Otherwise, we're misstating the problem and, subsequently, likely to misdiagnose it.

In the information ecosystem where information is cheap, and knowledge is expensive (to quote Joan Donovan), providing this context is probably where the future value-add for journalistic efforts will lie. There’s a glut of disparate pieces of information. What’s required is to contextualise and simplify them (without oversimplifying).

P.S. This is not about any individual journalist or particular organisation. It is something I've frequently seen with reporting of academic studies across various formats, and around the world.

Correcting beliefs and the role of religion

Ok, now that you’ve been through my opening rant, let’s turn our attention to a recent study that investigated the role that religious framing can play in correcting belief in covid-related misinformation. It considered two categories: conspiracy theories and miracle cures/medical misinformation [Sumitra Badrinathan, Simon Chauchard - Working Paper]. What follows is mostly my understanding of the paper. To attribute any claims to the authors, I would recommend reading the paper itself.

Also, it is rather fortuitous that this paper came up on one of my (many) feeds since I’ve been thinking even more about the role of identity since reading a recommendation list by Chris Bail on fivebooks.com [FiveBooks].

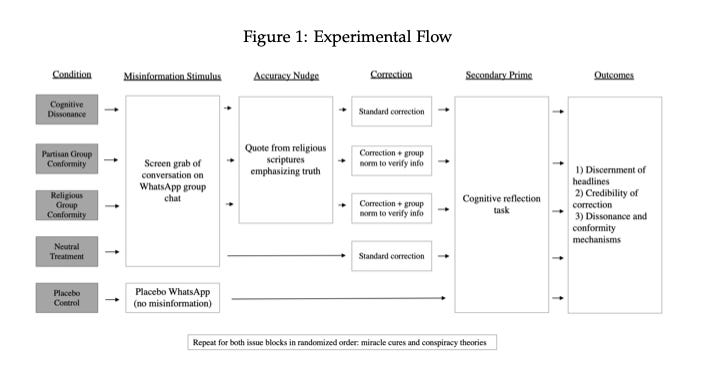

Now getting back to it. The authors investigate this in the context of 2 identities:

1) Political/Partisan: Support for the BJP (the ~1600 respondents were put into two blocks based on whether they supported the BJP or not - strongly supported, somewhat supported, somewhat opposed and strongly opposed).

2) Religious: Hindus (A degree of ‘religiosity’ was determined on a scale of 0 [low] to 1 [high])

Each of these blocks was put through 5 conditions that corrected for cognitive dissonance, partisan group conformity, religious group conformity, as well as neutral and placebo conditions.

They identify two mechanisms for motivated reasoning to take hold:

We posit that motivated reasoning may affect individuals through two distinct mechanisms: cognitive dissonance with new information or social pressure to conform to group norms.

The implications of these are (paraphrased):

1. If scientific information/corrections are 'incongruent' with long-held beliefs, it can cause cognitive dissonance. To eliminate this dissonance, 'highly religious individuals' may adopt the misinformation.

2. Belief in information 'incongruent with group beliefs' can lead to 'fear of alienation from the in-group'. The authors refer to this as 'identity protective cognition', which is a form of motivated reasoning that results in increased pressure to hold/form 'group-congruent beliefs'.

3. Corrections that reduce dissonance and pressure to conform may reduce the 'prevalence of these beliefs'.

Some of my take-aways from reading it:

Individuals who are ‘highly religious’ or support the BJP are more likely to believe conspiracy theories and medical misinformation.

Two graphs on page 18 show that these seem to hold. Those who scored low on religiosity were significantly better at differentiating between true and false headlines. And of the 4 political identity groups, those who strongly supported the BJP were the least accurate. Notably, those who strongly opposed the BJP were also less accurate than the remaining 2 (somewhat supported and somewhat opposed) - and I wonder if there is something to draw on from there. For context, participants were asked to identify from a set of 12 headlines which were true and which were false. These 12 were two groups of 6 headlines related to conspiracies and medical misinformation (in each set, 4 were true and 2 were false).

Religious animosity (note: the authors used the term polarisation) also played a role as those who said that they would be upset about a friend’s inter-faith marriage were also less accurate than those who said they wouldn’t be upset.

Miracle cure misinformation was harder to correct, possibly because some beliefs in alternative cures, home remedies, etc. are pretty entrenched.

For conspiracy theories: all treatments improved the ability to accurately identify false and true headlines, especially in the cognitive dissonance treatment group (where a religious frame was used) and partisan conformity treatment.

For miracle cures: conformity treatments (religious and partisan) showed greater improvement. The cognitive dissonance treatment appeared to have no additional effect when compared with the neutral treatment group, where a standard correction was used (without religious frame or situating it in an in-group partisan/religious setting).

The differing results for conspiracy theories and miracle cures suggest that different underlying belief-forming mechanisms were at work, thus requiring different types of interventions/strategies to counter/correct.

There is a lot more nuance (and insights) in the paper itself so, once again, I recommend reading it.

Another recent paper on countering misinformation among individuals with low digital literacy in Pakistan noted that (from the abstract) [Ayesha Ali, Ihsan Ayyub Qazi - arXiv.org]:

We do not find a significant effect of video-based general educational messages about misinformation. However, when such messages are augmented with personalized feedback based on individuals’ past engagement with fake news, we find an improvement of 0.14 standard deviations in identifying fake news.

…

Our results suggest that educational interventions can enable information discernment but their effectiveness critically depends on how well their features and delivery are customized for the population of interest.

These are studies in different contexts, so I am not suggesting tacking on their conclusions serially. But they do add to a growing body of research that suggests we will need a targeted, patient, high-effort path to improving the state of the information ecosystem. There are no ‘miracle cures’ (see what I did there?): no single corporation (or breaking one up), technological solution, or law is going to fix this.

This means we’ll have to do the taxing, difficult work of understanding and interacting with those we disagree with and may even abhor (especially them), even though the current incentives favour expressions of outrage and out-group animosity (see editions 48 and 46).

Prateek, I am one of those ‘low religiosity’ persuasion people, why should I care?

Good question. I am one of those “‘low religiosity’ persuasion people” too. But I think this religiosity aspect is crucial because, in the current Indian information ecosystem, you cannot escape religious identity, whether it is through negative expression (Islamophobia, accusations of ‘hinduphobia’, targeting religious minorities) or positive (expressions of solidarity). And whether we like to admit it or not, the radicalisation effects that Guy Schleffer and Benjamin Miller wrote about in The Political Effects of Social Media Platforms on Different Regime Types appear to be at play here [TNSR] (I wrote about this study in Takshashila’s Technopolitik #4).

Related: Since we’ve dealt with religious identity today, some links worth checking out:

Classical Ideas Podcast episode with Dr. Dheepa Sundaram on Social Media and Hinduism [link].

Islamophobia in Indian Media - Zainab Sikander [link]