Of Spaghetti on the wall, one nation one internet, fact(un)checked

MisDisMal-Information Edition 41

What is this? MisDisMal-Information (Misinformation, Disinformation and Malinformation) aims to track information disorder and the information ecosystem largely from an Indian perspective. It will also look at some global campaigns and research.

What this is not? A fact-check newsletter. There are organisations like Altnews, Boomlive, etc., who already do some great work. It may feature some of their fact-checks periodically.

Welcome to Edition 41 of MisDisMal-Information

Around 100 years ago, in early April in edition 35 - I had promised you a less ‘doom and gloom’y edition. I may not deliver fully on that, but for a change, I’ll start off with something positive.

Not spaghetti on the wall

In a study titled ‘Combining interventions to reduce the spread of viral misinformation’, a team comprising Joseph B. Bak-Coleman, Ian Kennedy, Morgan Wack, Andrew Beers, Joseph S Schafer, Emma S. Spiro, Kate Starbird and Jevin D. West attempted to compare individual interventions to curb the spread of false information (deplatforming, virality circuit-breakers, etc.) with a combination of different types of interventions. The results?

we reveal that commonly proposed interventions–including removal of content, virality circuit breakers, nudges, and account banning—are unlikely to be effective in isolation without extreme censorship. However, our framework demonstrates that a combined approach can achieve a substantial (~50%) reduction in the prevalence of misinformation. Our results challenge claims that combating misinformation will require new ideas or high costs to user expression. Instead, we highlight a practical path forward as misinformation online continues to threaten vaccination efforts, equity, and democratic processes around the globe.

Let’s dig in a little. Here’s what happened when they looked at individual interventions:

Content removal: Assuming that platforms can perfectly remove all instances of a particular piece of content, outright removal resulted in a ~93% (median) reduction in engagement (tweets, replies, quote-tweets and retweets) if done within 30 minutes, and a ~50% reduction if done after a 4-hour delay.

Virality circuit breakers: A 10% reduction in virality implemented after 4 hours can lead to a 33% reduction in the spread of false information.

Nudges + reduced reach: I’ll quote here ‘Nudges that reduce sharing by 5, 10, 20, and 40% result(ed) in a 6.6, 12.4, 22.6, and 38.9% reduction in cumulative engagement, respectively’.

Account bans:

For ~1500 accounts removed in early 2021, engagement with false information dropped by 12%.

Then they considered a 3 strikes scenario. For verified accounts, this resulted in a ~8% reduction in engagement. It appeared to make a significant difference when the threshold for removal was set at having 10K followers.

With combination interventions, they considered 2 levels.

Modest: ~36% reduction in the volume of misinformation.

Reducing Virality: Applied to 5% of the content, reducing virality by 10% and enforced after 2 hours.

20% of this content was removed after 4 hours.

Nudges resulted in 10% less sharing of false information.

3 strikes rule for account bans applied to those with >100K followers.

Aggressive: ~49% reduction

Reducing Virality: Applied to 10% of the content, reducing virality by 20% and enforced after 1 hour.

20% of this content was removed after 2 hours. This isn’t explicitly mentioned, I’ve inferred this based on how it was worded.

Nudges resulted in 20% less sharing of false information.

3 strikes rule for account bans applied to those with >50K followers.

I’ve obviously simplified heavily here, so as usual, I will recommend reading the actual paper too. Nevertheless, what the analysis (relying on simulations) does point to is the need to explore using multiple kinds of interventions simultaneously. I will caution that any such combinations should be tested, and the results should be published before we start throwing spaghetti on the wall to see what sticks. Because experiences of many around the world tell us that it is the marginalised and vulnerable who are the most adversely impacted by arbitrary steps.
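To make the 'combination' intuition a little more concrete, here is a toy sketch in Python. It is not the model from the paper: the cascade shape (`growth`, `decay`), the 48-hour window and the function names are all my own assumptions, the interventions are applied to the whole cascade rather than to a share of content, and account bans are left out entirely. The intervention sizes in `modest` loosely echo the 'Modest' package above, but the percentages it prints are illustrative only and should not be compared with the paper's results.

```python
def total_engagement(hours=48, seed=100.0, growth=1.5, decay=0.9,
                     nudge=0.0, breaker=0.0, breaker_after=None,
                     removed_share=0.0, remove_after=None):
    """Total engagement of one toy cascade (all parameters are assumptions).

    Each hour, engagement is multiplied by a virality rate that starts at
    `growth` and fades by `decay` per hour. Interventions only ever shrink
    that rate or the current engagement.
    """
    total = 0.0
    current = seed
    for hour in range(hours):
        # Content removal: a share of the cascade disappears at a fixed hour.
        if remove_after is not None and hour == remove_after:
            current *= 1.0 - removed_share
        total += current

        rate = growth * (decay ** hour)   # virality fades over time
        rate *= 1.0 - nudge               # nudges reduce sharing throughout
        if breaker_after is not None and hour >= breaker_after:
            rate *= 1.0 - breaker         # circuit breaker kicks in after a delay
        current *= rate
    return total


def reduction(**interventions):
    """Fractional drop in total engagement relative to no intervention."""
    baseline = total_engagement()
    return 1.0 - total_engagement(**interventions) / baseline


# Hypothetical scenarios, each described as keyword arguments.
nudge_only = {"nudge": 0.10}
breaker_only = {"breaker": 0.10, "breaker_after": 2}
removal_only = {"removed_share": 0.20, "remove_after": 4}
modest = {**nudge_only, **breaker_only, **removal_only}

for name, scenario in [("nudges only", nudge_only),
                       ("circuit breaker only", breaker_only),
                       ("removal only", removal_only),
                       ("all three combined", modest)]:
    print(f"{name}: {reduction(**scenario):.1%} less engagement")
```

In this toy setup the combined scenario always reduces engagement by more than any single intervention, simply because each one shrinks the same cascade a little further. Whether real platforms behave anything like this is exactly what would need testing.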

Related: Facebook announced it would notify people if a page they visit has repeatedly shared false information, reduce the reach of people who share false information repeatedly (beyond the offending posts, which it already claimed to do), as well as a redesigned prompt for when people are about to post debunked false claims. Notably, it didn’t say how many strikes it would take to reduce the reach of all posts by a user. What are the odds we’ll see this being applied to any prominent Indian accounts any time soon?

One earth one internet v/s One nation one internet

Whether it is the Indian state's renewed face-off with Twitter, or Russian threats to throttle Google's traffic, or even Canada doing an ‘atmanirbhar’ internet (with a bill that wants to prioritise Canadian content) - such events always raise questions about models of internet governance and the power balance (or imbalance) between various participants.

In 'Four Internets', Kieron O’Hara and Wendy Hall identified four digital governance models: Silicon Valley's Open Internet, driven by technology; Brussels' Bourgeois Internet, with its focus on peace, prosperity and cohesion through rules; Beijing's Authoritarian Internet, with its emphasis on control and surveillance; and DC's Commercial Internet, which places the interest of private actors at the centre. A fifth, Moscow's spoiler model, which exploits an open, decentralised internet, featured as an addendum.

18 months later, in 'India, Jio, and the Four Internets', Ben Thompson highlighted an 'increased splintering in the non-China model' with a 'U.S. Model' (similar to a combination of O'Hara and Hall's open and commercial internets), a European model (similar to the bourgeois internet), and an Indian model characterised by 'unencumbered' foreign participation in 'digital goods' and a 'tighter leash' over the physical layer. Jack Balkin's 2018 essay 'Free Speech is a triangle' used an inverted triangle to depict the dyadic interactions between states, corporations and societies. The ability to speak is an outcome of the power struggle between these participants.

A recent paper by Demos provides a framework to visualise these models and relative power. It identifies four powers that will determine rules for and shape the internet - states, corporations, individuals and machines, with each of them enjoying some degree of control/power.

The reality of the internet as a 'network of networks' and a 'borderless entity' also implies each model and its four powers will interact with other models/powers and influence them. Such models are not a perfect representation of reality but provide useful frameworks to think about the future of the internet.

Fact (un) checked

In a public newsletter post titled ‘Checking Facts Even If One Can't’, Zeynep Tufekci writes, in the context of last year's fact-checks around the lab-leak theory:

The cluster of “lab leak” theories itself needs unpacking, as it includes claims that seem to range from plausible but uncertain to what I’d consider unlikely and distracting. But nonetheless, it’s useful to walk through one example of a “fact-check” from Politifact from last year that has recently been “archived”:

…

An honest evaluation in September 2020—before the WHO investigative trip and everything that has been revealed since—would be something along the lines of this: “We don’t know and there are a lot of conflicting opinions about this, and the evidence base is incomplete and different groups of scientists have different views. We are not in a position to assign plausibility levels because that’s what scientific debate is about and we are not scientists or investigative journalists, and we are supposed to fact-check things that are clear facts, not resolve complex scientific debates taking place in a politically-charged landscape”.

I’ve been thinking of this extensively in the context of the claims attributed to Luc Montagnier that those who have taken the vaccine will die within 2 years. Now, one part of debunking this claim has been clarifying that the statement was misattributed. But let’s suppose he did actually say that. How do you debunk the ‘2 years’ part of the claim since no one actually received the vaccine more than 2 years ago? This is not analogous to the example in Zeynep Tufekci’s post, of course. The question I am trying to get to is how do you square the need for authoritative public messaging with the importance of conveying the underlying complexity.

Related: “Facebook will no longer take down posts claiming that Covid-19 was man-made or manufactured” [Cristiano Lima - Politico].

Meanwhile in India

GoI’s ‘feuds’ with Twitter, Facebook and Whatsapp are all escalating and will likely have evolved by the time I finish writing this edition and it hits your inboxes.

On the Twitter front, both the Delhi Police [ANI Twitter thread] and MEITY [GOI_Meity tweet] have put out strongly (and oddly) worded press releases after Twitter’s statement earlier on Thursday, which, among other things, expressed concern for the safety of its employees [Soumyarendra Barik - Entrackr]. Remember Vittoria Elliott’s story on ‘hostage-taking laws’ from edition 39?

On the Whatsapp, Facebook front, there are few better sources to follow for the traceability debate in India than Aditi Agrawal’s reportage. Her most recent piece (at the time of writing) based on the petitions filed by Facebook and Whatsapp is also a must-read.

Ok, now back to the stuff I had planned for this section.

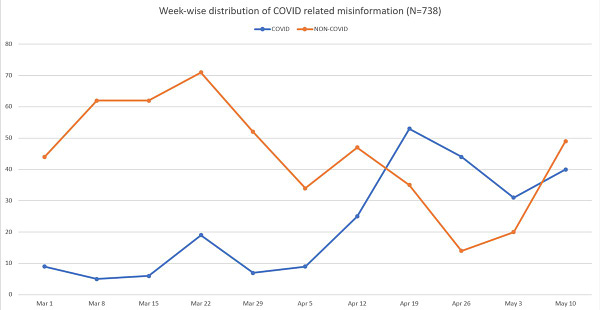

In edition 1, I wrote about an analysis of patterns in COVID-19 misinformation in India by Syeda Zainab Akbar, Divyanshu Kukreti, Somya Sagarika and Joyojeet Pal (go back and read it, if you haven’t). Now, Syeda Zainab Akbar and Joyojeet Pal have put out another analysis based on the 2nd wave.

We studied online misinformation in India during the second wave of the COVID crisis. We find a dramatic rise of 'utilitarian' misinformation, purporting to 'deal with' the issue, compared with blame-oriented misinformation, purporting to find 'those at fault' in 2020. (1/6)

Read this along with Meghna Rao’s RestofWorld piece on misinformation in a family whatsapp group.

FirstDraft’s Carlotta Dotto and Lucy Swinnen investigated Islamophobic tweets from India in the context of social media conversations around Palestinians. This is certainly a trend: I had done a preliminary analysis of a similar phenomenon around violence in Sweden and Norway in September 2020 [TheWire], and noticed a similar pattern when the BLM protests started out around a year ago [Edition 6]. Sections of ‘Indian Twitter’ just seem to be waiting for a trigger to jump on.

IndianExpress with a round-up of Mr. Ramdev’s ‘controversial remarks’. Related: Alishan Jafri on the contrast between GoI’s attempts to curb the usage of ‘Indian variant’ v/s inaction in response to other narratives [TheWire]

2 arrests that received some coverage:

A YouTuber from Ludhiana was handed over to the Arunachal Pradesh police for ‘racial remarks against a Congress MLA from Arunachal Pradesh and for bearing ill will towards the people of the state.’

Yasser Arafat for posting ‘a pro-Palestine image and comment on his social media page’.

Arafat’s post on his Facebook page, which he calls Azamgarh Express and on which he usually posts local news that is sometimes combined with his own views, had simply noted that in Gaza the coming Friday, every house and every vehicle would fly the Palestinian flag.

But some readers among the Azamgarh Express page’s 17 lakh followers appeared to have misread the post as an appeal by Arafat for every Muslim in Azamgarh to raise the Palestinian flag in their home and on their vehicle on the coming Friday.

Around the world

In the US, ‘‘Rogue’ Commerce unit scanned social media for Census disinformation’. [Kim Lyons - TheVerge]

From Russia, ‘YouTube feels heat as Russia ramps up “digital sovereignty” drive’. [Max Seddon - Financial Times via arsTechnica]

In France, influencers were offered money to spread disinformation about vaccines. [Hebe Campbell - EuroNews]. Subsequent reporting [Charlie Haynes - Twitter] suggested that the company, Fazze, had approached German influencers too.

Also, see this thread

A report by DoubleThink on China’s information operations during the 2020 Taiwan elections.

“Online Hate Becomes Real-World Violence In Israel–Palestine”. [Jane Lytvynenko - BuzzfeedNews]

“When Zionism is Antisemitic”. [Becca Lewis - Patreon]

Laos’ Ministry of Public Security has ordered the creation of a taskforce to track and combat ‘fake news’ [AccessNow]. See Edition 21 - Of the state and States of Information Disorder, which referenced a report by the Library of Congress that listed at least 5 countries as having relied on a task force at some point: Australia, Denmark, France, Russia and Canada.

Speaking of Canada, I had referenced this earlier in the edition, but wanted to include it specifically in this section too. “Canada Wants YouTube, TikTok to Prioritize Canadian Content”. [Paul Vieira - WSJ]