Of Bricks in the firewall, UnTrend, I C BATMAN and the Information Disorder World Cup.

MisDisMal-Information Edition 17

What is this? This newsletter aims to track information disorder largely from an Indian perspective. It will also look at some global campaigns and research.

What is this not? A fact-check newsletter. There are organisations like Altnews, Boomlive, etc. who already do some great work. It may feature some of their fact-checks periodically.

Welcome to Edition 17 of MisDisMal-Information

Hate is Trending, Trends are Not

Hey Prateek - you spoke about hate just last week!

Well, sorry, I did tell you it was unlimited, didn’t I? Unlike 1984’s Two Minutes Hate, 2020’s is the 24-hours-a-day, 7-days-a-week variety (I cannot claim originality here; this was inspired by Maelle Gavet’s piece in Fast Company).

Over the weekend, there were violent protests in Malmo, Sweden. Anwesha Mitra wrote an explainer in The Free Press Journal. I won’t go into the details of the ‘on-the-ground’ politics because I am far from being any sort of expert on Swedish or Norwegian politics. What I did notice, though, was that as these events were taking place, terms like SwedenRiots, WeAreWithSweden and NorwayRiots were trending on Twitter in India. In the early phases, as I was trying to find authoritative news sources to figure out what was happening, even searches on Google/Bing mainly pointed to Indian publications (yes, I use Bing sometimes).

Of course, on the face of it, there is nothing wrong with this. Twitter is a global platform, the world is a global village (isn’t that the phrase?), and India is a free country (It is, no?). There is nothing to say that somebody can’t share their opinion(s) on something that happens in a different country. What they choose to say does matter, though.

From Edition 6, when I was looking into Twitter activity around the time the BLM/George Floyd protests started:

… like USARiots and USAonFire. In fact, on both these hashtags, of the accounts that actually publish location information on their profiles, the top ones were from India. Even the accounts with the most tweets using the hashtag appear to be from India. Now, this is very different from saying that most or even a substantial portion of the activity was from India. It was still higher than I expected.

See? Nothing wrong, nothing even out of the ordinary. Also, from earlier in the same paragraph:

I spent some time looking at the hashtag USA riots, because I noticed a similarity in anti-protest narratives from HK, Anti-CAA, J&K and now George Floyd, “rioters”, “looters” etc. I was also surprised to see a lot of RW seemingly Indian (or Indian-origin) handles active on hashtags like USARiots and USAonFire ….

That pattern persisted in the Sweden/Norway case too. I wrote about it for The Wire, but here’s the gist.

Using a combination of Hoaxy, Twitter Search and APIs, I tried to find what kinds of messages gained traction.

Hoaxy clusters indicated that the accounts that got a lot of traction typically associate themselves with anti-minority content.

It appeared that a substantial amount of the activity was driven by accounts that listed themselves as belonging to locations in India.

Hashtag clouds indicated many references to Bangalore violence/riots, Delhi Riots, Kashmir, Poland, Islam, Jihad and ‘Just Saying’.

The top 1% of tweets accounted for 55-72% of the activity.

The content of the top 1% largely targeted minorities in India.

One account, which purportedly belonged to a Caucasian Swedish citizen and got a lot of engagement on one of the hashtags, had one of its first tweets in Hindi/Urdu written in Roman script.
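For anyone wanting to reproduce a ‘top 1% of tweets accounted for X% of the activity’ kind of figure, here is a minimal sketch. It assumes ‘activity’ means per-tweet engagement (say, retweets + likes) that you have already pulled from the API; the function name and that definition of engagement are my assumptions, not necessarily how the numbers in the article were computed.

```python
import math

def top_share(engagements, top_fraction=0.01):
    """Share of total engagement captured by the top `top_fraction` of tweets.

    `engagements` is a list of per-tweet engagement counts (an assumed
    metric, e.g. retweets + likes). At least one tweet is always counted.
    """
    if not engagements:
        return 0.0
    total = sum(engagements)
    if total == 0:
        return 0.0
    # Rank tweets from most to least engagement and keep the top slice.
    ranked = sorted(engagements, reverse=True)
    k = max(1, math.ceil(len(ranked) * top_fraction))
    return sum(ranked[:k]) / total

# Made-up example: 200 tweets, 2 of which get most of the engagement.
sample = [500, 500] + [2] * 198
print(round(top_share(sample), 3))  # 0.716
```

The `max(1, ...)` guard just makes sure small datasets still count at least one tweet as the ‘top 1%’.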

I’ll include the Hoaxy clusters and tag clouds here, but all other content is in the article.

Sweden Riots

WeAreWithSweden

NorwayRiots

It seems like it was only last week that I was talking about Dangerous Speech (that’s because it was), so I won’t belabour the point. I’ll just include the definition: *‘any form of expression (e.g. speech, text, or images) that can increase the risk that its audience will condone or commit violence against members of another group.’*

And as Facebook finally banned T. Raja Singh (paywall), Surabhi Agarwal writes for ET that officials are debating India-specific content moderation rules around ‘hate speech’.

“Whether something comes under hate speech has to be defined by a consistent policy and has to have neutrality of ideology,” the official said.

…

“This debate around hate speech is skewed because what constitutes hate speech or otherwise will be determined by India’s rules and regulations and constitutional frameworks, not by community standards of a particular social media platform. It also needs to apply uniformly,” Malviya had said.

Now, that brings me to another point: hashtags and trends. Hashtags/Trending/Most Viewed type sections enable people to identify what is popular and also to coalesce around topics. Except, these have always been possible to game. All the way back in 2010, a writer at the Daily Beast demonstrated that it took ~1300 emails to break into the New York Times’ Most Viewed section. In 2017, Pranav Dixit wrote about the use of Twitter Trends for political propaganda in India. And Kiran Garimella tweeted recently about some upcoming research.

And it just so happens that hate is something we love to coalesce around too. This is why we can’t have nice things.

Katie Notopoulos’ tweet sums it up:

And now, with the U.S. elections looming, there are calls to ‘Untrend’ October. Sacha Baron Cohen got into the act, and probably also managed to super-spread a claim not backed by evidence, as Darius Kazemi points out.

Twitter, meanwhile, has said that it will add more context to Trends. That should be… fun?
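On why trend-style lists are so easy to game: a toy sketch of a ‘most viewed’ list that ranks items purely by raw event counts. This is a hypothetical illustration with made-up numbers, not how Twitter, Google or the NYT actually compute their lists (those details aren’t public).

```python
from collections import Counter

def top_items(events, n=3):
    """Return the n items with the most events, ranked purely by raw count."""
    return [item for item, _ in Counter(events).most_common(n)]

# Organic activity (made-up numbers): a few stories with steady readership.
organic = ["story_a"] * 900 + ["story_b"] * 700 + ["story_c"] * 500

# A coordinated push (also made-up): a small group repeatedly hits one item.
coordinated = ["story_x"] * 1000

print(top_items(organic))                # ['story_a', 'story_b', 'story_c']
print(top_items(organic + coordinated))  # ['story_x', 'story_a', 'story_b']
```

Anything that only counts volume can’t tell a thousand interested readers from a handful of people pressing refresh, which is exactly the gap that coordinated campaigns exploit.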

What? You want more hate?

Fine then, see/listen to:

OECD’s report on TVEC (terrorist and violent extremist content) among the top 50 content-sharing services (report makes no reference to India)

Emma Llansó talking about GIFCT on The Lawfare Podcast.

Google is considering pulling some fediverse apps from the Play Store because they can be used to access servers where users post ‘hate content’.

In theory, this isn’t much different than pulling a web browser for the potential to visit hate websites. The servers hosting the content are responsible, Eugen said, not the clients you use to access those servers.

Use of gamification by IS.

Enough now!

Another brick in the firewall

It happened: Bandemic got a sequel (Bandemic 2 - The one with PUBG). MeitY announced that it was going to block another 118 apps that happened to be Chinese. Why? ‘in view of information available they are engaged in activities which is prejudicial to sovereignty and integrity of India, defence of India, security of state and public order’ of course.

There was also a new-ish term being associated with it - ‘Internet Nationalism’.

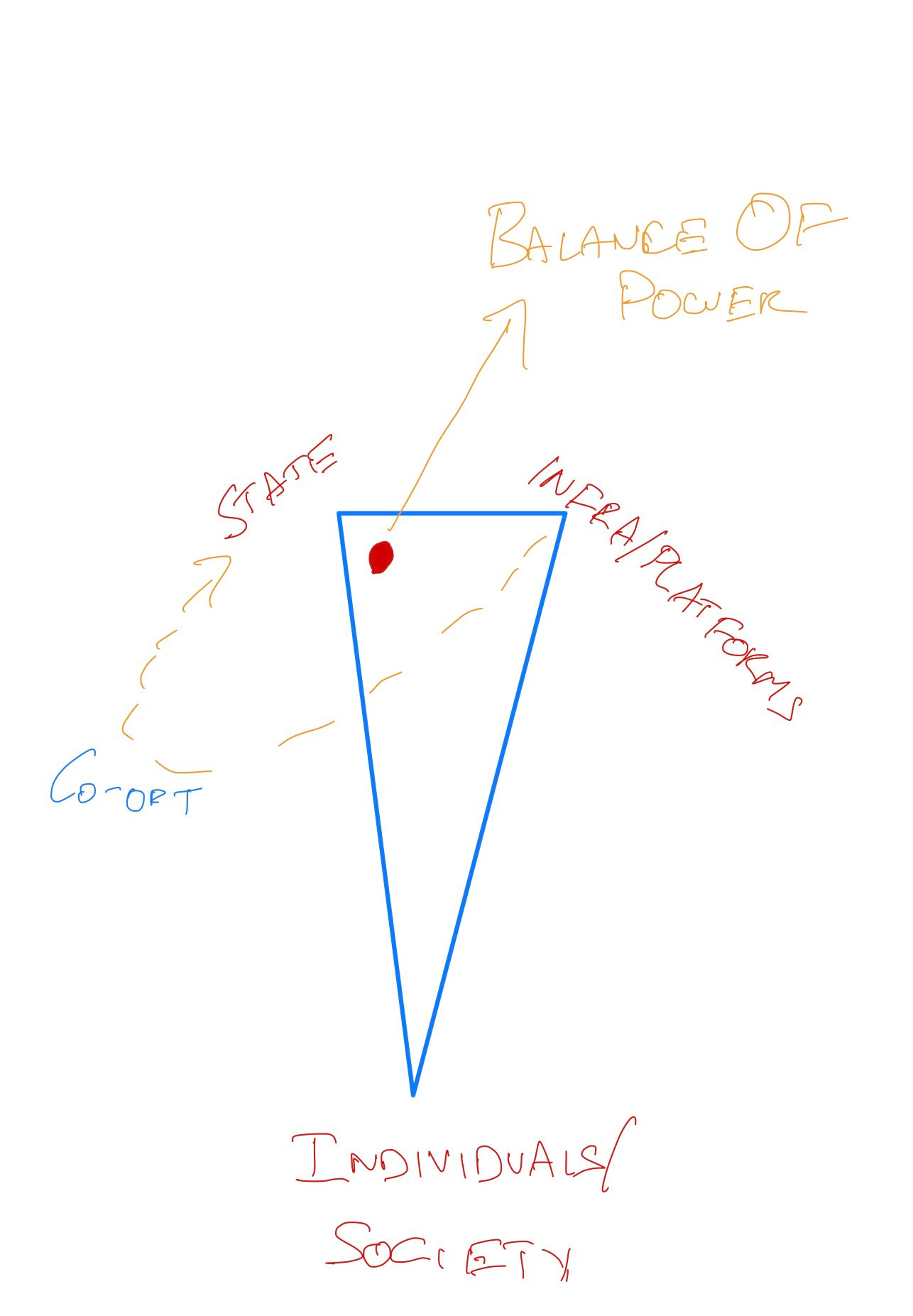

The way I see it, whatever you call it, it is a model of internet governance that moves the centre of power further away from individuals and society. Normally, the triangle would be equilateral, but in this model, that doesn’t make sense.

And I know most people probably don’t care about any app other than PUBG, but here’s a chart of the number of affected apps by developer, as per the Play Store (the ones I couldn’t find were attributed to ‘?’).

I already doom-propheted in Edition 9, so rather than repeat myself, I can just ‘copypasta’ (ok, technically it isn’t copypasta since it hasn’t left the newsletter 😂).

Worried yet? No? Ok, let me indulge in some “doom propheting”. Combine this with growing concerns around election interference, cross-border information warfare and domestic influence operations leading us towards ‘fake news’ regulation, plus flirting with mandatory verification/registration of users, and there’s one likely path: a Splinternet.

Oh, and you know who else is on a banning spree? Our next-door neighbour, though for very different reasons. Also, the Balochistan government has ‘placed a ban on websites and social media pages used to spread disinformation against the provincial government and their employees’.

A Twitter user even posted something hilarious about a cross-border contest, but since it was deleted, I can’t use it here.

*P.S. I know there was another set of 47 apps that were banned, but since they were largely clones, I am treating that more as a director’s cut DVD than a sequel, so Bandemic 2 still stands. Open Internet? We’ll just have to wait and see about that.*

Of course, no section referring to a splinternet is complete without the country that may have managed to nail jello to a wall, so here goes:

Yaqiu Wang on the effects the Great Firewall may have had on the outlook of an entire generation (*emphasis added*).

That has changed sharply in recent years as a crackdown on the internet and civil society has become more thorough and sophisticated—and the government’s messaging has grown more nationalistic.

While nationalistic sentiment among Chinese youth has always been strong in certain areas of national security—especially when it concerns “sovereignty” or territorial issues, such as the Senkaku Islands, Taiwan and Tibet—in recent years it has increasingly spread to discussions of culture, technology and even medicine. *Now young online Chinese, once conduits for new ideas that challenge the power structure, are increasingly part of Beijing’s defense operation.*

Iris Deng in SCMP on the Cyberspace Administration’s rumour-reporting app.

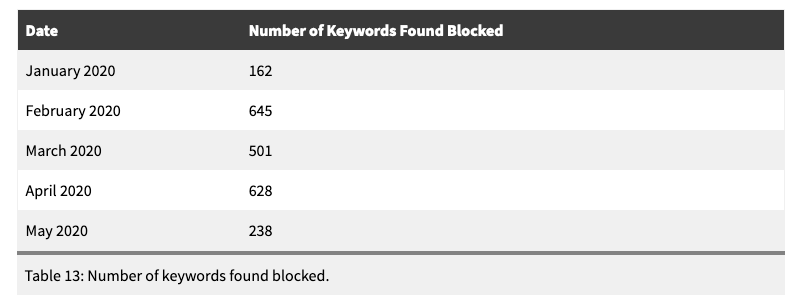

Citizen Lab released Censored Contagion II (here is Part I), a report on how information continues to be controlled on Chinese social media (think back to the model). They present a timeline of keywords being censored on WeChat.

In total we found 2,174 keywords blocked. The Table below provides a breakdown of censored keywords found within each month of our testing period.

And from their conclusion:

The themes of censored content show areas of sensitivity for the Chinese government from how the virus is contained in China, international diplomacy, and ongoing tensions between the U.S. and China. Outside of this political content, we also found censorship of health-related information, including the number of confirmed COVID-19 cases and deaths, as well as references to personal protective equipment supplies and medical facilities.

Inauthentic Coordinated Behaviour/Activity To MANipulate?-Narratives On Platforms Endlessly

Also known as…

I-C-BATMAN?-NOPE

What? It is better than CIB…

Facebook released its Coordinated Inauthentic Behaviour report for August, which covered networks operating from:

Russia: 13 accounts, 2 Pages. Targeting the US, UK, Algeria, Egypt and English-speaking countries in MENA.

US: 55 accounts, 42 Pages, 36 IG accounts. Targeting Venezuela, Mexico and Bolivia.

Pakistan: 453 accounts, 103 Pages, 78 Groups and 107 IG accounts. Targeting Pakistan and India.

Twitter too took action against some Russia-linked accounts and said that it will be blocking links from PeaceData.

Stanford Internet Observatory published a report “Reporting for Duty” on the Pakistan-based network.

The network engaged mainly in mass-reporting of accounts that were critical of the Pakistani state machinery and Islam, or linked to minority communities. It used a Chrome extension to facilitate this process. What is unclear is how Facebook concluded that the mass-reporting was ‘coordinated’, since we see multiple open calls to report/boycott accounts every day. Was the use of an extension the only factor? I ask because this will have implications as Facebook gets deeper into politically sticky territory with such interventions in the future.

Pages posted Pakistani nationalist content and were also critical of the BJP and Narendra Modi. Curiously, there were fan pages dedicated to praising the Indian Army. Some content was pro-Khalistan. The report didn’t give complete specifics about how much content these groups posted for each country, or about its reach. But based on some CrowdTangle data included in the report, these were the pages with the most posts:

Mujahid Markhor

Min Pakistan Ka Malik Hoon

Indian Air Force

Indian Army Lovers

Ratnavalli Talari

Six groups had over 20K users (4 of these were private). Based on their names, 4 appear to be related to the Indian armed forces, 1 to GK/Math/Current Affairs and 1 to Khalistan.

Meanwhile in India

Ok, not quite in India yet: the official Twitter account of the Permanent Mission of India to the UN had this to say about the Stanford IO report.

Ok, I guess we didn’t read the Feb CIB report or this September 2019 report by the Oxford Internet Institute.

No Face-to-show-book

Ever since the WSJ story, there has been a lot of reporting on Facebook in India.

Karisma Mehrotra writes about pages flagged by the BJP to Facebook and how public policy works at social media companies.

Venkat Ananth on Facebook’s ad policies being circumvented.

Sandhya Sharma on what Facebook may need to do given the increased scrutiny. (paywall)

TOI’s strong-ish editorial

Social harmony, national security, democracy itself are on the line here if new checks are not put in place on this gargantuan and parasitic platform. But this needs bipartisan investigation and policymaking. Given the obvious dangers of regulation by the executive, regulation of the social media giants is best done under the auspices of a parliamentary committee with representation from both ruling and opposition parties.

You’ve got mail

Psst... you know who seems to be flying under the radar… for now? A tiny blue bird. Maybe there are…

Thou shalt not ‘spread fake news’

An FIR was registered against a BJP MP in West Bengal for tweeting misleading information about an attack on a temple.

In Andhra, a journalist was detained, questioned and then let off “for spreading a fake news against a YSR Congress MP”; the report in question stated that the MP was in talks with the BJP.

In Kerala, the state government wants to file a complaint with the Press Council of India for the way some newspapers reported a fire in the state secretariat. Some moral gymnastics may be required.

And what looks to be a bizarre style of fact-checking from AyushmanNHA:

Around The World

Thou shalt not ‘spread fake news’ - international edition

In Egypt (syndicated Washington Post story), Bahey eldin Hassan was sentenced to 15 years in prison for criticising the government.

Hassan was prosecuted under both the penal code and a draconian new cybercrimes law that has been condemned by media and Internet freedom campaigners as a severe obstacle to freedom of expression. The law criminalizes insulting state institutions, such as the judiciary, and includes a provision outlawing the dissemination of “fake news” — a term that has become popular with autocrats around the world since the emergence of Donald Trump as a political force in the United States. Hassan’s comments were characterized as “insulting the judiciary,” and his criticism of the regime’s failure to hold anyone accountable for the 2016 disappearance, brutal torture and killing of Italian graduate student Giulio Regeni was found to violate the provision against “spreading fake news.”

Swaziland’s Ministry of Information, Communication and Technology is introducing a law which carries a 10-year prison sentence for ‘publishing fake news’.

In Pakistan, journalists received death threats after their reporting was labelled ‘fake news’.

Wired has an excerpt from “Blood and Oil: Mohammed bin Salman’s Ruthless Quest for Global Power” about attempts to silence critics on Twitter.

And more from Wired - Covid Is Accelerating a Global Censorship Crisis. Great, thanks pandemic.

Laurence Blair, for Rest of World, on a Peruvian village, a 5G conspiracy and engineers on a maintenance job.

Fool me once

A Reuters investigation about PeaceData (remember Twitter blocking its links back in I-C-BATMAN?-NOPE? Ok, maybe that acronym is a little too long.)

Acting on a tip from the FBI, Facebook and Twitter said on Tuesday they had identified Peace Data as the center of a Russian political influence campaign targeting left-wing voters in the United States, Britain and other countries.

The website succeeded in tricking and hiring freelance journalists to write articles about topics including the U.S. presidential election, the coronavirus pandemic and alleged Western war crimes, Facebook said.

BalkanInsight on the 2-way disinformation flow between anonymous sites/social networks and mainstream media.

“It seems that the motives for producing and distributing disinformation, anonymously or not, are usually associated with interest groups who want to compromise opponents or to ‘satisfy the electorate’s hunger for new and increasingly ridiculous nationalist articles – articles that the readership does not fully believe, but can no longer do without because nationalism and lies have become a part of its identity’.”

A claim that … information was hacked turned out to be false. But that didn’t stop people from believing it anyway. Turns out, the information wasn’t really confidential to begin with.

The kind of data included in the leak is either publicly available in much of the United States or can be accessed via Freedom of Information requests. In some states, such as Florida, voter registration and voting history are by law public record.

Information Disorder World-Cup

Sorry, did I say Information Disorder World-Cup? I meant to say US elections. I should confess that I read this term elsewhere but can’t remember where, and now I am shamelessly appropriating it.

Shayan Sardarizadeh will be tracking weekly engagements:

Shireen Mitchell on Disinformation and Digital Voter Suppression

Facebook is working with 17 independent researchers to study its impact on the elections.

Facebook has helped fight interference in more than 200 elections since 2017 and reduced fake news on its platform by more than 50%, according to independent studies.

Facebook will also stop accepting political ads 7 days before the US elections. But as this headline aptly points out, older ads with lies may be ok.

And..

Facebook also put out an update about how it is preparing for elections in Myanmar:

Preventing Hate Speech

Enforcing ‘Paid for by’ disclaimers on ads.

Verifying Pages

Limiting the spread of misinformation: the interesting bit here was a reference to an Image Context reshare product, which would warn users when they try to share images that are over a year old, among other things.

Forwarding limits on Messenger (5 at a time)

Third-Party Fact-Checking
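Neither feature’s internals are public, so here is a purely hypothetical sketch of the two checks as described: warn when an image being reshared is over a year old, and cap a forward at 5 recipients. The thresholds come from the update; the function names, the 365-day reading of ‘a year’ and the assumption that the platform knows when an image was first seen are mine.

```python
from datetime import datetime, timedelta
from typing import Sequence

IMAGE_AGE_WARNING = timedelta(days=365)  # "over a year old" (assumed to mean 365 days)
FORWARD_LIMIT = 5                        # Messenger forwarding limit from the update

def should_warn_on_reshare(first_seen: datetime, now: datetime) -> bool:
    """Hypothetical Image Context check: warn if the image is over a year old."""
    return now - first_seen > IMAGE_AGE_WARNING

def can_forward(recipients: Sequence[str]) -> bool:
    """Hypothetical forwarding check: allow at most FORWARD_LIMIT recipients."""
    return len(recipients) <= FORWARD_LIMIT

now = datetime(2020, 9, 7)
print(should_warn_on_reshare(datetime(2019, 3, 1), now))  # True
print(can_forward(["a", "b", "c", "d", "e", "f"]))        # False
```

The hard part in practice is obviously not the comparison but reliably knowing when an image first appeared, which this sketch simply takes as given.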

To me, Image Context seemed to be the only new thing here. Everything else listed is something they will have to do in every country they have a presence in (if they aren’t already).

The Unbearable trade-offs of being… a content moderator

To clarify, I am not referring to people who actually moderate content. I am referring to corporations whose very essence of existence is content moderation. And, you know, corporations are… people (is it still ok to say that, Mr. Romney?).

And if you go by the paper linked in the tweet:

Successful online speech governance is not an end-point to be arrived at, but an ongoing project of iteration, calibration and explanation based on changing rules, norms and technical capacity.

So, yeah, it is never going to be perfect and there will always be trade-offs. See, that’s easy enough to accept, isn’t it?

Of course, they don’t help their cause when they take steps that will make the information disorder problem worse, as Facebook is likely to do if it follows through on its threat to ban news platforms in Australia.

Then, there’s the problem of ‘deepfakes’. A study by Democracy Reporting International summarised platform positions on manipulated media. Notably, TikTok and Pinterest do not seem to have explicit policies addressing them. I should add, though, that just because the others have a policy doesn’t necessarily mean that they are able to address the problem. It is, at best, a signal that they are thinking about it. Whether that comes from a PR perspective or from wanting to solve the problem (or even both) is something we’ll find out later.

*Long Aside: Microsoft announced a bunch of measures to counter deepfakes. 2 technologies were highlighted:

Microsoft Video Authenticator, which will analyse a still photo/video to arrive at a confidence score of whether it has been manipulated or not.

Another technology that relies on content creators inserting hashes into content, and then relies on a client-side component (they gave the example of a browser plugin, but it could potentially be part of an app, etc.) that will basically validate the hash value.*
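The second technology boils down to a publish-then-verify idea. Here is a minimal sketch of that idea, assuming SHA-256 as the hash and assuming the client already has a trustworthy copy of the creator’s digest. It does not model Microsoft’s actual implementation, and the function names are hypothetical.

```python
import hashlib
import hmac

def publish_digest(content: bytes) -> str:
    """Creator side (hypothetical): compute a SHA-256 digest to ship with the content."""
    return hashlib.sha256(content).hexdigest()

def verify_content(content: bytes, published_digest: str) -> bool:
    """Client side (hypothetical): recompute the digest and compare it to the published one."""
    recomputed = hashlib.sha256(content).hexdigest()
    # compare_digest does a constant-time comparison; plain == would also work here.
    return hmac.compare_digest(recomputed, published_digest)

original = b"frame data from the original video"
digest = publish_digest(original)
print(verify_content(original, digest))                 # True
print(verify_content(original + b" (edited)", digest))  # False
```

The weak point is the assumption baked into the sketch: a matching hash only tells you the content hasn’t changed since the digest was computed, not who computed it, so everything hinges on how that digest reaches the client and why it should be trusted.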

And just in case I gave you some sense of optimism, let me knock that out with this: what can social media platforms do about government-sponsored information disorder?

Study Corner

Mike Wagner’s thread on a new study, The Value of Not Knowing (not open-access, which is why I’m using the Twitter thread). It seems to imply that being uninformed is better than being misinformed, as far as the chances of being re-informed(?) are concerned.

A study on how warnings improve the odds of correction.

providing individuals with a simple warning about the threat of misinformation significantly reduces the misinformation effect, regardless of whether warnings are provided proactively (before exposure to misinformation) or retroactively (after exposure to misinformation).

Not quite a study, but still worth exploring: Facebook has partially documented its content recommendation system.